KEYWORDS: Perspective Taking, Intentionality Understanding

Qingying Gao| Yijiang Li| Haiyun Lyu| Haoran Sun| Dezhi Luo| Hokin Deng

ICLR

Abstract

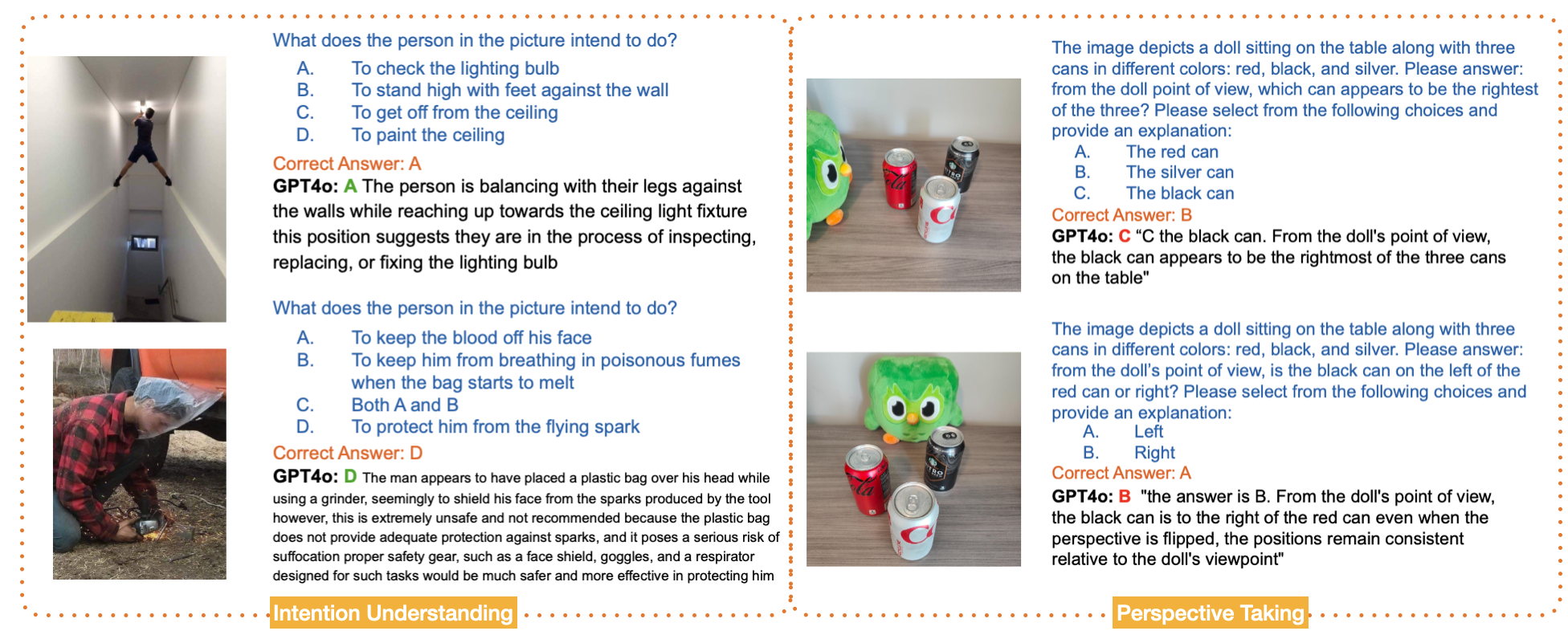

Knowing others’ intentions and taking others’ perspectives are two core components of human intelligence that are typically considered to be instantiations of theory-of-mind. Infiltrating machines with these abilities is an important step towards building human-level artificial intelligence. Recently, Li et al. built CogDevelop2K, a data-intensive cognitive experiment benchmark to assess the developmental trajectory of machine intelligence. Here, to investigate intentionality understanding and perspective-taking in Vision Language Models, we leverage the IntentBench and PerspectBench of CogDevelop2K, which contains over 300 cognitive experiments grounded in real-world scenarios and classic cognitive tasks, respectively. Surprisingly, we find VLMs achieving high performance on intentionality understanding but lower performance on perspective-taking. This challenges the common belief in cognitive science literature that perspective-taking at the corresponding modality is necessary for intentionality understanding.

@misc{gao2025visionlanguagemodelswant,

title={Vision Language Models See What You Want but not What You See},

author={Qingying Gao and Yijiang Li and Haiyun Lyu and Haoran Sun and Dezhi Luo and Hokin Deng},

year={2025},

eprint={2410.00324},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2410.00324},

}